Canary Releases: Why We Use a Phased Approach to Deployment

In today’s Continuous Integration and Continuous Delivery (CI/CD) world, it’s common to release cloud service updates when repository changes are detected. There are a variety of deployment strategies you can use to do this. For example, the highlander approach terminates ‘Version A’ and immediately rolls out ‘Version B’ (“There can be only one!”), whereas a rolling upgrade gradually replaces ‘Version A’ with ‘Version B.’

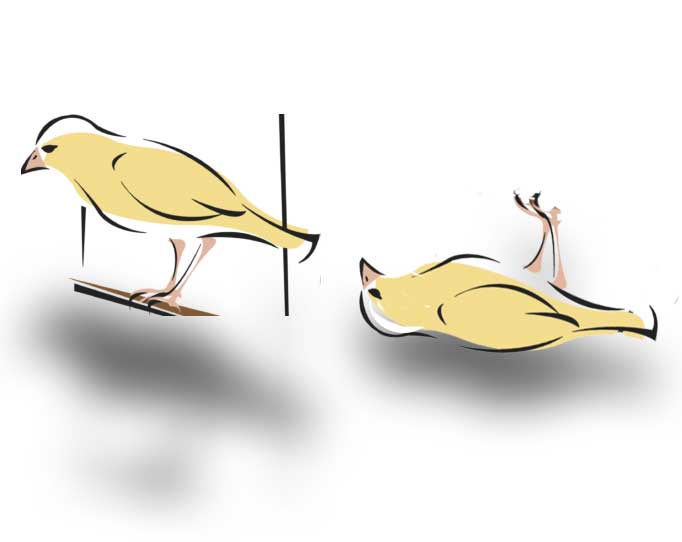

At xMatters, we use a phased approach called canary as our preferred mechanism for rolling out updates. Rather than upgrading everyone simultaneously, we initially release the update to a subset of systems and monitor it to ensure there are no disruptions. If a problem occurs, we roll back those systems to the previous version. If no problems are found, we release the update to all systems.

Overview of Canary Releases

The process begins by deploying a release candidate to our testing infrastructure (we run microservices, so each service has its own pipeline for initiating a release). During testing, we execute automated regression tests against the new release.

Once the release candidate is certified, it is tagged and deployed into our production environment. Service teams are notified that the release is ready. No systems are using the new release candidate at this point, but the service stack is deployed and ready to accept new traffic.

Step 1: Create a canary schedule

The first stage of the canary release is to separate users into upgrade groups and create a schedule. At each stage of the release, all users in one group are upgraded, and that group is monitored for issues before the next group is upgraded. Although creating the upgrade groups and schedule is a one-time task, both are continually maintained as new users are added.
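As a rough illustration, the upgrade groups and their order could be modeled like this. This is a minimal sketch, not our actual tooling: the `UpgradeGroup` class, the `build_schedule` function, and the user and group names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class UpgradeGroup:
    name: str
    users: list[str] = field(default_factory=list)


def build_schedule(assignments: dict[str, str], order: list[str]) -> list[UpgradeGroup]:
    """Bucket users into upgrade groups and return the groups in rollout order."""
    groups = {name: UpgradeGroup(name) for name in order}
    for user, group_name in sorted(assignments.items()):
        groups[group_name].users.append(user)
    return [groups[name] for name in order]


# Hypothetical users and group names, for illustration only.
assignments = {
    "smoke-test-1": "low-risk",
    "internal-qa": "low-risk",
    "customer-a": "general",
    "customer-b": "general",
    "alerting-instance": "final",
}
schedule = build_schedule(assignments, ["low-risk", "general", "final"])
for stage, group in enumerate(schedule, start=1):
    print(f"stage {stage}: {group.name} -> {group.users}")
```

In this sketch, maintaining the schedule as new users arrive is just a matter of adding an entry to `assignments` and rebuilding.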

Step 2: Create a rollback document

The next step is to generate a document that records the service version to which each system is currently mapped. (In practice, all systems should be on the same version, but exceptions are possible.) This document is kept in cloud storage and is used if systems need to be rolled back during the release process.
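One way to picture the rollback document is as a small JSON snapshot taken before the release starts. The sketch below assumes a JSON format and uses made-up service, system, and version names. It leaves out the upload to cloud storage, which depends on the provider.

```python
import json


def build_rollback_document(service: str, current_versions: dict[str, str]) -> str:
    """Serialize the system -> version mapping captured before the release."""
    document = {
        "service": service,
        "systems": dict(sorted(current_versions.items())),
    }
    return json.dumps(document, indent=2)


def versions_to_restore(rollback_json: str, upgraded: list[str]) -> dict[str, str]:
    """Look up the previous version for each system that was upgraded."""
    systems = json.loads(rollback_json)["systems"]
    return {system: systems[system] for system in upgraded}


# Hypothetical systems and versions; system-c is the exception on an older version.
snapshot = build_rollback_document(
    "notification-service",
    {"system-a": "1.4.2", "system-b": "1.4.2", "system-c": "1.4.1"},
)
print(snapshot)
print(versions_to_restore(snapshot, ["system-a", "system-c"]))
```

Recording the version per system, rather than a single "previous version," is what lets a rollback return the exceptions to where they started.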

Step 3: Upgrade users in the first group

The first group to upgrade contains low-risk users. In our systems, this group comprises ‘smoke test’ users and internal users in our non-production environment (which is the environment used for staging and testing changes before a full release).

Step 4: Monitor the canary

This is the most critical part of the deployment process, and the details are specific to the service we’re releasing. Upgrading in phases gives us the opportunity to catch problems before the full release, avoiding potential impact on the entire user base. This means we need to continually monitor the group of users that has been upgraded to ensure the deployment has not had a negative impact. If we detect issues that exceed the defined threshold (usually a combination of Service Level Indicators (SLIs)), the release is halted automatically.
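To make the threshold idea concrete, here is a minimal sketch of a halt decision based on several SLIs. The SLI names, the limits, and the `min_breaches` rule are assumptions chosen for illustration; real thresholds are specific to each service.

```python
def breached_slis(observed: dict[str, float], limits: dict[str, float]) -> list[str]:
    """Return the SLIs whose observed value exceeds its limit.

    A metric that is missing from `observed` is treated as breached, so a
    gap in monitoring stops the release rather than letting it continue.
    """
    return sorted(
        name for name, limit in limits.items()
        if observed.get(name, float("inf")) > limit
    )


def should_halt(observed: dict[str, float], limits: dict[str, float],
                min_breaches: int = 1) -> bool:
    """Halt when at least `min_breaches` SLIs are over their limit."""
    return len(breached_slis(observed, limits)) >= min_breaches


# Hypothetical SLIs and limits.
limits = {"error_rate": 0.01, "p95_latency_ms": 500.0}
healthy = {"error_rate": 0.002, "p95_latency_ms": 310.0}
degraded = {"error_rate": 0.04, "p95_latency_ms": 320.0}

print(should_halt(healthy, limits), breached_slis(healthy, limits))
print(should_halt(degraded, limits), breached_slis(degraded, limits))
```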

Eating Our Own Clever Cooking

At xMatters, we use our own software as an integral part of our canary release process. In the final upgrade group, we include specific xMatters alerting instances that have a workflow that can be triggered by an API call. If the monitoring software detects a breach of SLIs, it sends a request to this API endpoint with a payload containing the service, version, release team, and alert details.
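The request might look something like the sketch below. The four payload fields come from the description above, but the field names, the JSON layout, and the URL are placeholders rather than the real xMatters API. The example only builds the request and does not send it.

```python
import json
import urllib.request

# Hypothetical endpoint: a placeholder, not a real xMatters URL.
WORKFLOW_URL = "https://example.invalid/hypothetical-workflow-trigger"


def build_breach_request(service: str, version: str, release_team: str,
                         alert_details: dict, url: str = WORKFLOW_URL) -> urllib.request.Request:
    """Build (but do not send) the POST request that reports an SLI breach."""
    payload = {
        "service": service,
        "version": version,
        "release_team": release_team,
        "alert_details": alert_details,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical values for illustration.
request = build_breach_request(
    "notification-service", "1.5.0", "platform-release",
    {"sli": "error_rate", "observed": 0.04, "limit": 0.01},
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# Sending would be urllib.request.urlopen(request); omitted because the URL is a placeholder.
```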

The workflow parses the received payload, generates a lock file for the canary process, and writes it to a cloud storage location monitored by the release pipeline. After each upgrade group has been processed, the pipeline pauses for a few minutes and polls the cloud storage location for the lock file. If the file does not exist at the end of the timeout period, the pipeline proceeds to the next upgrade group. If the file is found, the release process is aborted and users are rolled back to the previous version.
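The pipeline side of this handshake can be sketched as a polling loop. In the example below, `lock_exists` stands in for the cloud storage check, and `upgrade` and `rollback` are hypothetical callbacks. Rolling back every group upgraded so far, in reverse order, is an assumption about how the rollback is applied. The default timeout and interval are illustrative values for "a few minutes."

```python
import time
from typing import Callable, Iterable


def wait_for_lock(lock_exists: Callable[[], bool], timeout_s: float,
                  interval_s: float) -> bool:
    """Poll until the lock file appears (True) or the timeout elapses (False)."""
    deadline = time.monotonic() + timeout_s
    while True:
        if lock_exists():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)


def run_release(groups: Iterable[str], upgrade: Callable[[str], None],
                rollback: Callable[[str], None], lock_exists: Callable[[], bool],
                timeout_s: float = 180.0, interval_s: float = 10.0) -> str:
    """Upgrade one group at a time, aborting and rolling back if a lock appears."""
    upgraded = []
    for group in groups:
        upgrade(group)
        upgraded.append(group)
        if wait_for_lock(lock_exists, timeout_s, interval_s):
            for done in reversed(upgraded):
                rollback(done)
            return "aborted"
    return "completed"


# Simulated run: the lock file appears while the second group is being monitored.
log = []
lock_checks = iter([False, True])
status = run_release(
    ["low-risk", "general", "final"],
    upgrade=lambda g: log.append(("upgrade", g)),
    rollback=lambda g: log.append(("rollback", g)),
    lock_exists=lambda: next(lock_checks),
    timeout_s=0.0,   # zero timeout so the simulation checks once per group
    interval_s=0.0,
)
print(status)
print(log)
```

Passing the storage check in as a function keeps the loop independent of any particular cloud provider and makes it easy to simulate, as the zero-timeout run above does.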

When the lock file has been written, the workflow step sends an alert to the release team’s notifiable devices and provides a link to the lock file (which contains the details of why the release has been aborted). This allows the team to investigate the issue and determine the next steps.

If there are no problems found during the release process, the alerting instances are updated and a second workflow is triggered to alert the release team of the final status of the release.

How Long Does the Canary Release Process Last?

The canary process should be – if you’ll pardon the obvious joke – short-lived, as monitoring and analytics ensure any problems are detected early in the release process. However, it’s possible that an issue exists in a path of the code that’s not covered by QA at the time of the release, and that’s not exercised by all users in the earlier stages. So, keeping the canary process brief between upgrade groups allows you to detect problems quickly. (Though you may be some way into the release before a problem is triggered.)

Conclusion

Using the canary release strategy is a great way to minimize the impact on your entire user base by releasing software in phases. However, as this might not be necessary for every release, the decision to run a release as a canary (if it’s not fully automated) depends on the extent of the changes being rolled out. Integrating xMatters into your process allows you to automate its stop and rollback functions and to send critical data about the status of a release back to the service release team.

Finally, the most important part of the release is to ensure that any issues discovered during the deployment process are fed back to the earlier QA stages, so that the same problem doesn’t recur in future releases.

Open a free xMatters account and see how we manage the quality of our deployments with canary releases.