Continuous Testing Is Crucial for DevOps, But Not Easy

This is part 4 of a blog series exploring common mistakes and best practices in testing. This week’s blog is about continuous testing in production.

As software transitions from a monolithic to a microservice architecture, organizations are adopting DevOps practices to accelerate delivery of features to customers and improve their experience.

Jumping into continuous testing without the right infrastructure, tools, and processes can be a disaster. Continuous testing plays an important role to achieve the fastest quality to market. Continuous testing requires several levels of monitoring with automated triggers, collaboration, and actions. Here’s what is required:

- Automatic Test Triggers to execute tests as software transitions from various stages – development / test / staging / production

- Service Health Monitoring to automate feedback on failures

- Test Result Monitoring to automate feedback on failures

- Identifying Root Cause of Failure and analyzing test results

Let’s take a closer look at each of these requirements.

1. Automated Test Triggers — To enable faster feedback, tests need to be classified in various layers:

Health check — Focus of these test to ensure the services are up and running . Such checks are triggered by various monitoring applications .

Smoke test — Focus of these tests are to verify key business features are operational and functional . Such tests should have short test cycle typically less than 15 min and executed on continuous basis.

Intelligent regression — Subset of the regression test scenarios are triggered based on the code changes with deployments and a full regression is triggered on nightly basis.

Benchmark/Load Test — Focus of these test to measure the performance of the each service and triggered on nightly basis.

Reliability/Chaos Testing — Focus of tests is to measure system behavior while failures are deliberately injected to services. Such tests are triggered on weekly basis to identify key infrastructural / operational issues.

2. Service Health Monitoring — Maintaining the health of services requires:

Automated Alerts — Automation notifies the relevant service team to take the appropriate action required by the failure.

3. Test Results Monitoring — As test are triggered , it’s essential to monitor results and take required steps when there are failures:

Automated Notifications — Notify the appropriate service development teams to take necessary actions such as block release from going to production if a critical defect is introduced.

4. Identifying Root Cause of the failure — Set up a framework to track every request made for automated test runs and how those requests traverse the various distributed services:

Identifying Information — Every request made for automation test runs includes a custom header that includes information like Test Run ID or Test Case ID. After the request is submitted, the response will contain application trace IDs which you can track under test results log.

Every test failure triggers an automated investigation process which does the following:

- Retrieve the test case details to identify the list of component tested in the respective tests

- Identify if any one of the components has changed recently since the last successful test run

- Identify the list of changes and retrieve the metrics for each of those components

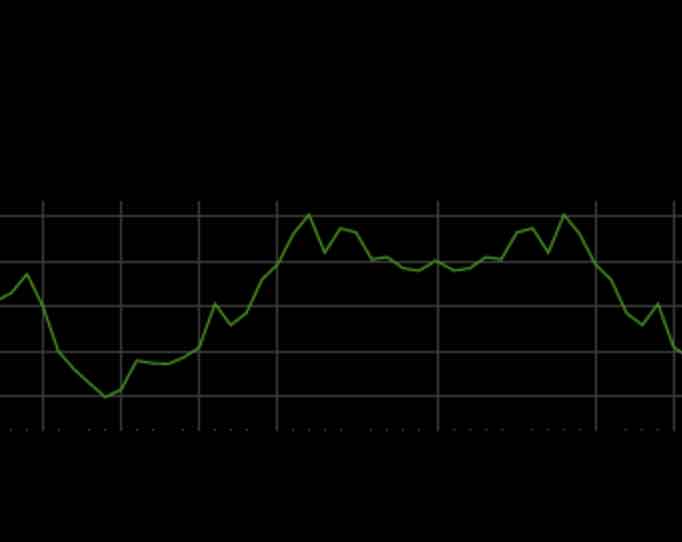

- Correlate the test results based on the changes to identify a pattern

- Once the problem is identified, update the service team owners to take the necessary action to fix the problem